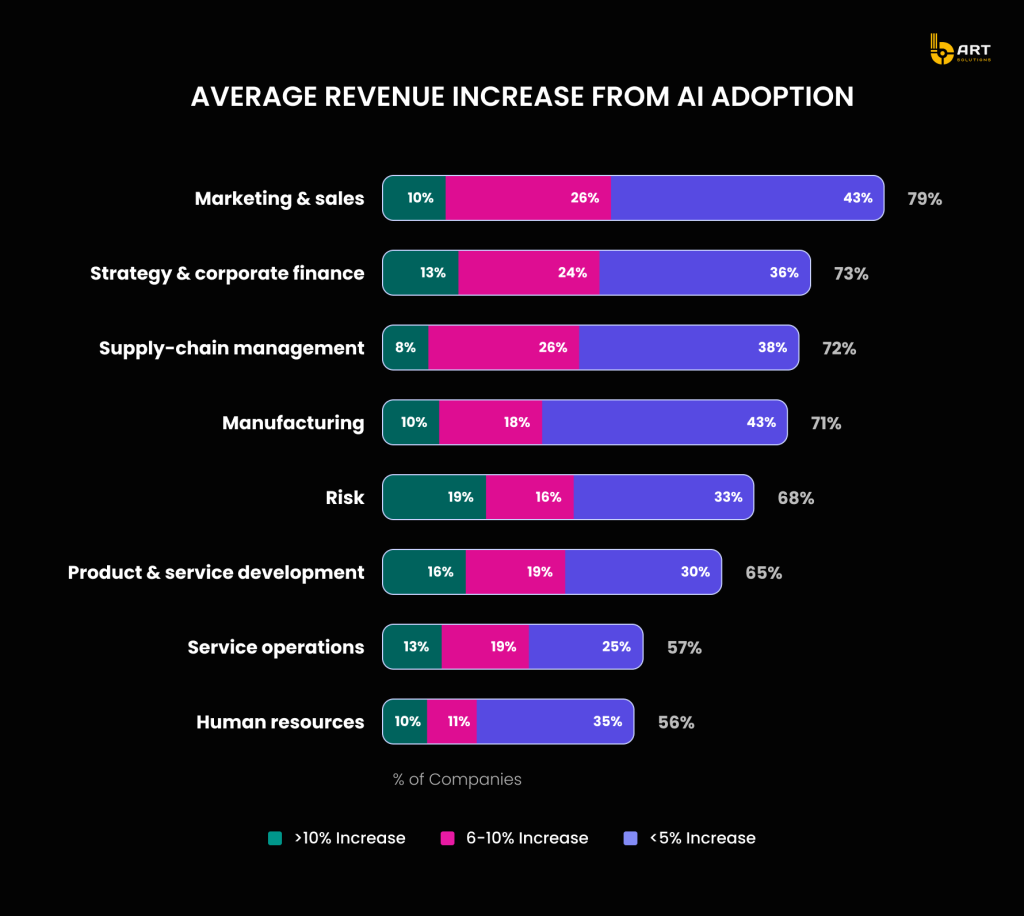

Artificial intelligence (AI) and machine learning (ML) are transforming industries, driving innovation, and creating new opportunities for businesses worldwide. The global machine learning industry is projected to grow at a CAGR of 38.8% between 2024 and 2029, with 61% of marketers prioritizing ML and AI in their data strategies. As 82% of companies seek employees with ML skills and 74% of leaders believe in the potential of increased investment in these technologies, the importance of integrating AI is clear.

This article explores how to build AI-powered applications with .NET.

If you have an AI project in mind and need an expert team to bring it to life, contact bART Solutions.

The Importance of AI Solutions using .NET

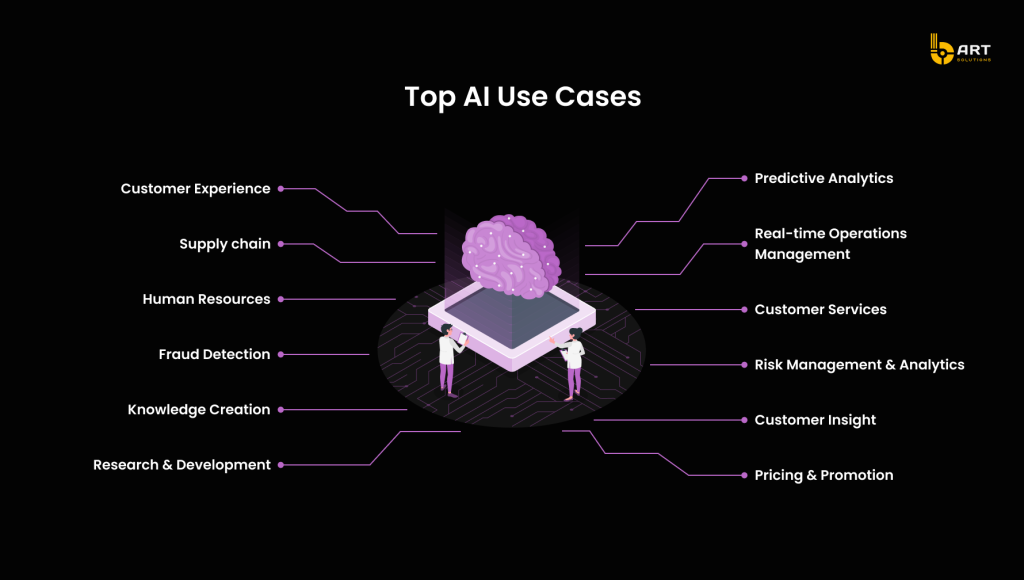

AI and ML technologies are the backbone of modern apps, driving efficiency, enhancing user experiences, and providing critical insights. .NET AI solutions and machine learning services are at the forefront of these changes, offering powerful tools and frameworks to build intelligent, responsive, and scalable software.

Key Benefits of .NET AI-Driven Apps

Process Automation

.NET applications can use AI and ML to automate routine work across systems, such as consolidating customer data from several separate systems into one central database.

Predictive Analytics

Integrating AI algorithms with .NET applications enables the detection of complex patterns in large datasets, providing valuable insights.

Cybersecurity

ML models in .NET analyze data on security threats and attacker behavior, autonomously enhancing security measures.

Virtual Assistants and Chatbots

AI-driven chatbots in ASP.NET and virtual assistants improve user engagement and customer satisfaction while reducing the need for extensive support staff.

Natural Language Processing (NLP) in .NET apps

Embedding AI and ML in .NET applications allows them to understand human language, processing text and voice data using computational linguistics and deep learning techniques.

Advanced Image and Video Processing

Enterprises implement .NET apps with deep learning technologies and CNN models for advanced image and video processing, including enhancement, restoration, segmentation, compression, and object detection.

For AI-powered platforms, consulting with experts can ensure project viability and success.

AI App Development with .NET: Getting Started

To start AI app development, it’s crucial to leverage the robust tools and capabilities of .NET. By integrating ML models into .NET platforms, you can transform data into actionable insights and drive innovative solutions.

Solid Technical Foundation for AI Implementation

Development Environment: Ensure you have the latest version of Visual Studio or your preferred IDE installed. Install the .NET SDK and relevant extensions for machine learning, such as ML.NET Model Builder. These tools will streamline the process of building and training AI models in the .NET ecosystem.

Data Preparation and Management: Collect and structure your datasets carefully. Ensure your data is clean, normalized, and relevant to your use case. Techniques like data augmentation, feature engineering, and normalization are essential for this process.

Building and Training Models: Define your machine learning pipeline using ML.NET’s API. Select appropriate algorithms, configure hyperparameters, and specify data transformations. Training is an iterative process, so keep refining the pipeline based on performance metrics.

Evaluating and Tuning Models: After training, evaluate the model using ML.NET’s evaluation metrics. Techniques such as cross-validation and hyperparameter tuning can enhance model accuracy and robustness.
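The build, train, and evaluate steps above can be sketched with ML.NET roughly as follows. This is a minimal sketch, not a definitive implementation: the `HouseData` schema, the synthetic rows, and the choice of the SDCA regression trainer are assumptions made for illustration, and the code assumes the `Microsoft.ML` NuGet package is installed.

```csharp
using System;
using System.Linq;
using Microsoft.ML;
using Microsoft.ML.Data;

// Hypothetical schema: predict a price (in thousands) from size and room count.
public class HouseData
{
    public float Size { get; set; }
    public float Rooms { get; set; }
    public float Price { get; set; }
}

public static class TrainingSketch
{
    public static RegressionMetrics TrainAndEvaluate()
    {
        var mlContext = new MLContext(seed: 0);

        // Synthetic rows so the sketch runs without an external data file.
        var rows = Enumerable.Range(1, 200).Select(i => new HouseData
        {
            Size = 40 + i,
            Rooms = 1 + i % 5,
            Price = 1.5f * (40 + i) + 10f * (1 + i % 5)
        }).ToList();

        IDataView data = mlContext.Data.LoadFromEnumerable(rows);
        var split = mlContext.Data.TrainTestSplit(data, testFraction: 0.2);

        // Pipeline: combine the feature columns, normalize them, then train a regressor.
        var pipeline = mlContext.Transforms
            .Concatenate("Features", nameof(HouseData.Size), nameof(HouseData.Rooms))
            .Append(mlContext.Transforms.NormalizeMinMax("Features"))
            .Append(mlContext.Regression.Trainers.Sdca(
                labelColumnName: nameof(HouseData.Price),
                featureColumnName: "Features"));

        ITransformer model = pipeline.Fit(split.TrainSet);

        // Evaluate on the held-out rows only.
        IDataView predictions = model.Transform(split.TestSet);
        return mlContext.Regression.Evaluate(
            predictions, labelColumnName: nameof(HouseData.Price));
    }

    public static void Main()
    {
        RegressionMetrics metrics = TrainAndEvaluate();
        Console.WriteLine($"R^2: {metrics.RSquared:F3}");
        Console.WriteLine($"RMSE: {metrics.RootMeanSquaredError:F3}");
    }
}
```

In a real project the rows would come from a file or database, and the metrics would guide the next iteration of the pipeline.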

After the models are validated, they are ready to be integrated into a project. A professional .NET AI services provider can assist with this process.

Introduction to ML.NET and Its Capabilities

ML.NET, introduced in 2018, is a framework designed to bring machine learning capabilities to the .NET ecosystem. It is open-source and cross-platform, so it runs on Windows, Linux, and macOS.

Features and Capabilities of ML.NET

Automated Machine Learning (AutoML)

ML.NET includes AutoML, which simplifies the model-building process by automatically exploring different algorithms and hyperparameters to find the best model for a given dataset.
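As an illustration, an AutoML regression experiment might look like the sketch below. It assumes the `Microsoft.ML` and `Microsoft.ML.AutoML` NuGet packages; the `HouseData` schema, the synthetic data, and the 30-second time budget are hypothetical choices for the example.

```csharp
using System;
using System.Linq;
using Microsoft.ML;
using Microsoft.ML.AutoML;
using Microsoft.ML.Data;

// Hypothetical schema used only for this sketch.
public class HouseData
{
    public float Size { get; set; }
    public float Rooms { get; set; }
    public float Price { get; set; }
}

public static class AutoMlSketch
{
    public static ExperimentResult<RegressionMetrics> Run(uint seconds)
    {
        var mlContext = new MLContext(seed: 0);

        // Synthetic rows so the sketch runs without an external data file.
        var rows = Enumerable.Range(1, 200).Select(i => new HouseData
        {
            Size = 40 + i,
            Rooms = 1 + i % 5,
            Price = 1.5f * (40 + i) + 10f * (1 + i % 5)
        }).ToList();
        IDataView data = mlContext.Data.LoadFromEnumerable(rows);

        // AutoML tries different trainers and settings within the time budget.
        return mlContext.Auto()
            .CreateRegressionExperiment(maxExperimentTimeInSeconds: seconds)
            .Execute(data, labelColumnName: nameof(HouseData.Price));
    }

    public static void Main()
    {
        var result = Run(30);
        Console.WriteLine($"Best trainer: {result.BestRun.TrainerName}");
        Console.WriteLine($"R^2: {result.BestRun.ValidationMetrics.RSquared:F3}");
    }
}
```

A longer time budget lets the experiment explore more candidates, so the best run can differ between executions.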

Integration with Existing .NET Applications

One of the standout features of ML.NET is its seamless integration with existing .NET applications. Once a model is trained, it can be easily incorporated into any .NET application, whether it’s a web app, desktop app, or mobile app. This integration is facilitated through simple APIs that allow for predictions and model updates.
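A minimal sketch of that workflow: train a model, save it to disk, then load it elsewhere and predict through a `PredictionEngine`. The `HouseData` and `PricePrediction` types, the synthetic data, and the `model.zip` path are assumptions for illustration; the code assumes the `Microsoft.ML` NuGet package.

```csharp
using System;
using System.Linq;
using Microsoft.ML;
using Microsoft.ML.Data;

// Hypothetical input and output types for this sketch.
public class HouseData
{
    public float Size { get; set; }
    public float Rooms { get; set; }
    public float Price { get; set; }
}

public class PricePrediction
{
    // Regression trainers write their prediction to the "Score" column.
    [ColumnName("Score")]
    public float Price { get; set; }
}

public static class IntegrationSketch
{
    public static float TrainSaveLoadPredict(string modelPath)
    {
        var mlContext = new MLContext(seed: 0);
        var rows = Enumerable.Range(1, 200).Select(i => new HouseData
        {
            Size = 40 + i,
            Rooms = 1 + i % 5,
            Price = 1.5f * (40 + i) + 10f * (1 + i % 5)
        }).ToList();
        IDataView data = mlContext.Data.LoadFromEnumerable(rows);

        var pipeline = mlContext.Transforms
            .Concatenate("Features", nameof(HouseData.Size), nameof(HouseData.Rooms))
            .Append(mlContext.Transforms.NormalizeMinMax("Features"))
            .Append(mlContext.Regression.Trainers.Sdca(
                labelColumnName: nameof(HouseData.Price)));
        ITransformer trained = pipeline.Fit(data);

        // Training side: persist the model together with its input schema.
        mlContext.Model.Save(trained, data.Schema, modelPath);

        // Application side: load the file and create a prediction engine.
        ITransformer loaded = mlContext.Model.Load(modelPath, out DataViewSchema _);
        using var engine =
            mlContext.Model.CreatePredictionEngine<HouseData, PricePrediction>(loaded);

        return engine.Predict(new HouseData { Size = 100, Rooms = 3 }).Price;
    }

    public static void Main()
    {
        Console.WriteLine($"Predicted price: {TrainSaveLoadPredict("model.zip"):F1}");
    }
}
```

Note that `PredictionEngine` is not thread-safe, so a web application would typically keep one engine per thread or use a pooled engine rather than share a single instance.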

Interoperability with Other Frameworks

ML.NET supports interoperability with other popular ML frameworks like TensorFlow and ONNX. This means developers can leverage pre-trained models from these frameworks or export ML.NET models to be used elsewhere.

Extensive Model Evaluation and Improvement Tools

After training a model, ML.NET provides comprehensive evaluation metrics to assess its performance. These metrics help to evaluate how well the model is performing and identify areas for improvement. The framework also supports techniques for model refinement, such as cross-validation and hyperparameter tuning, to enhance model accuracy and reliability.
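For example, k-fold cross-validation can be run with a single call. The sketch below assumes the `Microsoft.ML` NuGet package; the `HouseData` schema, the synthetic data, and the five-fold setting are illustrative assumptions.

```csharp
using System;
using System.Linq;
using Microsoft.ML;

// Hypothetical schema used only for this sketch.
public class HouseData
{
    public float Size { get; set; }
    public float Rooms { get; set; }
    public float Price { get; set; }
}

public static class CrossValidationSketch
{
    public static double[] RSquaredPerFold(int folds)
    {
        var mlContext = new MLContext(seed: 0);
        var rows = Enumerable.Range(1, 200).Select(i => new HouseData
        {
            Size = 40 + i,
            Rooms = 1 + i % 5,
            Price = 1.5f * (40 + i) + 10f * (1 + i % 5)
        }).ToList();
        IDataView data = mlContext.Data.LoadFromEnumerable(rows);

        var pipeline = mlContext.Transforms
            .Concatenate("Features", nameof(HouseData.Size), nameof(HouseData.Rooms))
            .Append(mlContext.Transforms.NormalizeMinMax("Features"))
            .Append(mlContext.Regression.Trainers.Sdca(
                labelColumnName: nameof(HouseData.Price)));

        // Trains one model per fold and evaluates it on that fold's held-out rows.
        var results = mlContext.Regression.CrossValidate(
            data, pipeline, numberOfFolds: folds,
            labelColumnName: nameof(HouseData.Price));

        return results.Select(r => r.Metrics.RSquared).ToArray();
    }

    public static void Main()
    {
        double[] scores = RSquaredPerFold(5);
        Console.WriteLine($"Mean R^2 over {scores.Length} folds: {scores.Average():F3}");
    }
}
```

A large spread between folds usually signals that the model is sensitive to which rows it sees, which is worth investigating before deployment.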

Scalability and Performance Optimization

ML.NET is designed to handle large datasets and high-throughput scenarios. Its IDataView abstraction streams data lazily, so a dataset does not have to fit in memory, and some scenarios, such as image classification and ONNX model inference, can use GPU acceleration.

Robust Documentation and Community Support

ML.NET benefits from extensive documentation and a strong community. Developers have access to tutorials, examples, and forums that shorten the learning curve and help them solve problems faster.

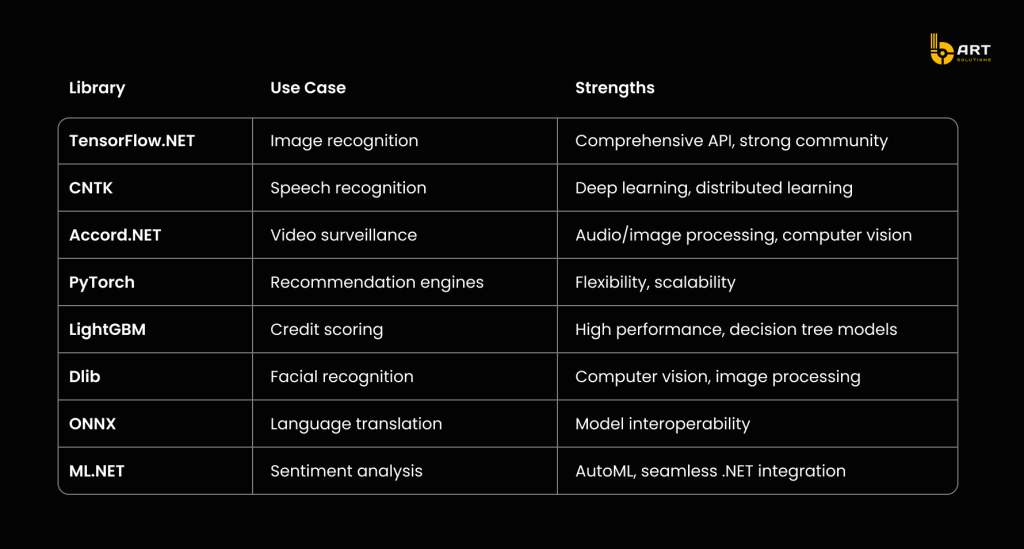

AI and ML Libraries and Frameworks Compatible with .NET

Choosing the right AI and ML libraries and frameworks for a .NET project depends on the specific needs, such as the desired type of ML model or the necessary AI features.

Comparison of AI and ML Libraries in .NET

TensorFlow.NET

TensorFlow, developed by Google, is a popular ML framework for creating and training ML models. TensorFlow.NET (TF.NET) provides .NET bindings and aims to make the full TensorFlow API available in C#. This makes it easier to build, train, and deploy machine learning models within the .NET environment.

CNTK (Cognitive Toolkit)

Created by Microsoft, CNTK (Cognitive Toolkit) is a deep learning toolkit that describes neural networks as computational graphs. It supports model types such as feed-forward DNNs, convolutional networks, and recurrent networks. CNTK can add commercial-grade distributed deep learning to .NET applications and is available under an open-source license. Note that CNTK is no longer under active development.

Accord.NET

Accord.NET is a comprehensive machine learning framework written in C#. It provides tools for audio and image processing, computer vision, signal processing, and statistics. Accord.NET has merged with the AForge.NET project, offering a unified API for building machine learning models in .NET applications.

PyTorch

PyTorch is an open-source deep learning framework known for its flexibility and scalability. While primarily a Python library, it can be used from .NET applications, for example through the TorchSharp bindings, to implement AI and ML features. PyTorch builds models dynamically and provides NumPy-like tensor computation.

LightGBM

LightGBM, part of Microsoft’s DMTK project, is an efficient gradient boosting framework for machine learning. It supports decision tree algorithms for classification, ranking, and other ML tasks. LightGBM is used for building and deploying ML models in .NET applications, offering high performance and scalability.

Dlib

Dlib is an open-source C++ toolkit with numerous ML algorithms and tools for building complex software solutions. It is often used in .NET applications for machine learning and computer vision tasks, including image processing and facial recognition.

ONNX

The Open Neural Network Exchange (ONNX) is a cross-platform, open-source format for representing machine learning models. Models exported to ONNX from other frameworks can be run in .NET applications, from C# console apps to mobile apps, and ONNX works seamlessly with ML.NET.

ML.NET

ML.NET is a popular library for building custom ML models using C# and F# within the .NET ecosystem. It offers tools for designing, training, and deploying high-level ML models, including support for AutoML. ML.NET integrates with other ML frameworks like TensorFlow and ONNX, enabling scenarios such as sentiment analysis, product recommendations, price predictions, customer segmentation, object detection, and fraud detection.

Building AI Products with .NET

Machine learning in .NET offers a robust platform for building sophisticated AI models. The key to unlocking this potential lies in understanding the foundational algorithms. These algorithms, ranging from linear regression to complex neural networks, form the backbone of predictive analytics and intelligent decision-making.

Implementing Supervised and Unsupervised Learning Models Using ML.NET

Supervised Learning: Trained using labeled data, effective for tasks where historical data can inform future outcomes.

Classification: Categorizes data into predefined classes (e.g., spam detection, image recognition).

Regression: Predicts continuous values (e.g., housing prices, stock market trends).

Unsupervised Learning: Finds hidden patterns and structures in the input data without requiring labeled data.

Clustering: Groups data into clusters based on similarity (e.g., customer segmentation).

Anomaly Detection: Identifies unusual data points, crucial for fraud detection and network security.
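To make the unsupervised case concrete, the sketch below clusters customers with ML.NET's K-Means trainer. The `Customer` schema, the two synthetic customer groups, and the choice of two clusters are assumptions for illustration; the code assumes the `Microsoft.ML` NuGet package.

```csharp
using System;
using System.Linq;
using Microsoft.ML;
using Microsoft.ML.Data;

// Hypothetical customer features for segmentation.
public class Customer
{
    public float AnnualSpend { get; set; }
    public float VisitsPerMonth { get; set; }
}

public class ClusterPrediction
{
    // Cluster IDs are 1-based keys.
    [ColumnName("PredictedLabel")]
    public uint ClusterId { get; set; }

    // Distance from the input to each cluster centroid.
    [ColumnName("Score")]
    public float[] Distances { get; set; }
}

public static class ClusteringSketch
{
    public static uint AssignCluster(Customer customer)
    {
        var mlContext = new MLContext(seed: 0);

        // Two synthetic groups: occasional shoppers and frequent big spenders.
        var rows = Enumerable.Range(0, 100).Select(i => i % 2 == 0
            ? new Customer { AnnualSpend = 100 + i, VisitsPerMonth = 1 }
            : new Customer { AnnualSpend = 5000 + i, VisitsPerMonth = 12 }).ToList();
        IDataView data = mlContext.Data.LoadFromEnumerable(rows);

        // No label column is needed: K-Means groups rows by feature similarity.
        var pipeline = mlContext.Transforms
            .Concatenate("Features", nameof(Customer.AnnualSpend), nameof(Customer.VisitsPerMonth))
            .Append(mlContext.Clustering.Trainers.KMeans(
                featureColumnName: "Features", numberOfClusters: 2));

        ITransformer model = pipeline.Fit(data);
        using var engine =
            mlContext.Model.CreatePredictionEngine<Customer, ClusterPrediction>(model);
        return engine.Predict(customer).ClusterId;
    }

    public static void Main()
    {
        uint id = AssignCluster(new Customer { AnnualSpend = 5200, VisitsPerMonth = 11 });
        Console.WriteLine($"Assigned to cluster {id}");
    }
}
```

A supervised model follows the same pipeline shape but adds a label column, as in classification or regression trainers.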

Case Studies of Successful AI Implementations in .NET Applications

The versatility and power of .NET, combined with ML.NET, open up numerous possibilities for successful AI implementations across various industries. Here are some examples illustrating how different industries could benefit from this integration:

Financial Services: Predictive Analytics for Credit Scoring

A financial services company leverages ML.NET to develop a sophisticated credit scoring system. By training a supervised learning model on historical loan data, the company accurately predicts loan defaults and assesses credit risk.

Retail: Personalized Customer Recommendations

A retail chain utilizes ML.NET to enhance its customer recommendation engine. Using unsupervised learning techniques, such as clustering, the company analyzes purchasing behavior to segment customers into distinct groups.

Healthcare: Early Disease Detection

A hospital network applies ML.NET to develop an early disease detection system. By training classification models on patient records and medical images, the system identifies patterns indicative of early-stage diseases, such as cancer. Integration with the hospital’s .NET-based electronic health record (EHR) system ensures scalability and security, maintaining patient confidentiality.

Manufacturing: Predictive Maintenance

A manufacturing company implements a predictive maintenance system using ML.NET to minimize downtime and extend the life of machinery. By analyzing sensor data and historical maintenance records with anomaly detection models, the system predicts equipment failures before they occur. .NET-based control platforms facilitate real-time monitoring and decision-making.

E-commerce: Fraud Detection

An e-commerce platform harnesses the power of ML.NET to create a robust fraud detection system. Using a combination of supervised and unsupervised learning models, the platform analyzes transaction data to detect fraudulent activities. The system identifies unusual patterns and flags suspicious transactions for further review, enhancing security and instilling greater trust among users.

Energy: Smart Grid Optimization

A utility company uses ML.NET to optimize its smart grid operations. By applying regression models to forecast energy demand and clustering algorithms to manage load distribution, the company improves energy efficiency and reduces operational costs. AI-driven insights enable better resource allocation and enhance grid stability. Integration with the company’s .NET-based management systems provides real-time analytics and facilitates responsive adjustments to energy distribution.

Integrating Pre-Trained AI Models in .NET Apps

API Integration

REST APIs: An AI model can be deployed as a REST API service and called from a .NET application. This allows the app to send data to the API and receive predictions in real time.

```csharp
using System.Net.Http;
using System.Text;
using System.Threading.Tasks;

// jsonData holds the serialized model input; the URL is a placeholder endpoint.
var client = new HttpClient();
var content = new StringContent(jsonData, Encoding.UTF8, "application/json");
var response = await client.PostAsync("https://api.example.com/predict", content);
response.EnsureSuccessStatusCode(); // Fail fast on non-2xx responses
var result = await response.Content.ReadAsStringAsync();
```

Containerization: Use Docker to containerize AI models, then deploy them as microservices. This allows scaling individual components independently and ensures better resource management.

```shell
docker build -t ai-model .
docker run -d -p 5000:80 ai-model
```

Embedding AI Models

Direct Embedding: Integrate pre-trained models into a .NET application directly by converting them into a compatible format and embedding the inference logic.

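```csharp
// LoadModel, ModelInput, value1, and value2 are placeholders for your own loader and input type.
var model = LoadModel("model.bin");
var result = model.Predict(new ModelInput { Feature1 = value1, Feature2 = value2 });
```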
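One common way to embed a pre-trained model is to export it to ONNX and run it with ONNX Runtime. The sketch below assumes the `Microsoft.ML.OnnxRuntime` NuGet package and a hypothetical `model.onnx` file whose single input is a float tensor of shape [1, 2]; adjust the shape and values to match your own model.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;
using Microsoft.ML.OnnxRuntime;
using Microsoft.ML.OnnxRuntime.Tensors;

public static class OnnxEmbeddingSketch
{
    public static float[] Predict(string modelPath, float feature1, float feature2)
    {
        // The session loads the model once; reuse it across predictions in real code.
        using var session = new InferenceSession(modelPath);

        // Read the input name from the model instead of hard-coding it.
        string inputName = session.InputMetadata.Keys.First();

        // Assumed input shape: one row with two float features.
        var tensor = new DenseTensor<float>(new[] { feature1, feature2 }, new[] { 1, 2 });
        var inputs = new List<NamedOnnxValue>
        {
            NamedOnnxValue.CreateFromTensor(inputName, tensor)
        };

        using var results = session.Run(inputs);
        return results.First().AsEnumerable<float>().ToArray();
    }

    public static void Main()
    {
        // "model.onnx" is a placeholder path for a model you have exported.
        float[] output = Predict("model.onnx", 1.0f, 2.0f);
        Console.WriteLine(string.Join(", ", output));
    }
}
```

Because inference runs in-process, there is no network round trip, at the cost of shipping the model file with the application.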

Cloud-based AI Services with .NET

Azure Cognitive Services: Utilize Azure’s suite of pre-built AI services (now part of Azure AI services) to add capabilities like vision, speech, language, and decision-making to your .NET applications.

```csharp
using System;
using System.Collections.Generic;
using Microsoft.Azure.CognitiveServices.Vision.ComputerVision;
using Microsoft.Azure.CognitiveServices.Vision.ComputerVision.Models;

// Initialize the Computer Vision client
var client = new ComputerVisionClient(new ApiKeyServiceClientCredentials("your_api_key"))
{
    Endpoint = "your_endpoint"
};

// Analyze an image URL
var imageUrl = "https://example.com/image.jpg";
var features = new List<VisualFeatureTypes?> { VisualFeatureTypes.Description };
var analysis = await client.AnalyzeImageAsync(imageUrl, features);

// Output the description of the image
Console.WriteLine(analysis.Description.Captions[0].Text);
```

Best Practices for AI in .NET Apps

Efficient Data Handling

Data Caching: Cache data to reduce I/O operations and speed up model inference.

Batch Processing: Process data in batches to optimize memory usage and processing time.
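Both ideas can be sketched in plain C# without any ML library. In the example below, `ScoreBatch` is a stand-in for a real model call and `FeatureCache` is a hypothetical in-memory cache; neither is an ML.NET API. It assumes .NET 6 or later for `Enumerable.Chunk`.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

public static class BatchScoring
{
    // Stand-in for a real model call that scores a whole batch at once.
    public static float[] ScoreBatch(float[] batch) =>
        batch.Select(x => x * 2f).ToArray();

    // Splits the input into fixed-size batches so only one batch is processed at a time.
    public static List<float> ScoreInBatches(IEnumerable<float> inputs, int batchSize)
    {
        var results = new List<float>();
        foreach (float[] batch in inputs.Chunk(batchSize))
        {
            results.AddRange(ScoreBatch(batch));
        }
        return results;
    }
}

// Hypothetical cache: loads each key once, then serves it from memory.
// Not thread-safe; use ConcurrentDictionary if several threads share it.
public sealed class FeatureCache
{
    private readonly Dictionary<string, float[]> _cache = new();
    private readonly Func<string, float[]> _load;

    public int Loads { get; private set; }

    public FeatureCache(Func<string, float[]> load) => _load = load;

    public float[] Get(string key)
    {
        if (!_cache.TryGetValue(key, out float[] value))
        {
            value = _load(key); // Expensive I/O happens only on a cache miss.
            Loads++;
            _cache[key] = value;
        }
        return value;
    }
}
```

For example, `BatchScoring.ScoreInBatches(data, 256)` scores `data` in chunks of 256 items, and a `FeatureCache` wrapped around a file or database reader avoids repeated reads for the same key.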

Model Optimization

Quantization: Reduce model size and improve inference speed by converting model weights from floating-point to integer.

Pruning: Remove redundant weights in the model to make it faster and more efficient.
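To show what quantization does to the weights, here is a minimal sketch of symmetric 8-bit quantization in plain C#. It is a simplified illustration, not how any particular framework implements it: the `WeightQuantizer` class is hypothetical, and it assumes a non-empty weight array.

```csharp
using System;
using System.Linq;

public static class WeightQuantizer
{
    // Maps float weights to signed 8-bit integers using one shared scale factor.
    public static (sbyte[] Values, float Scale) Quantize(float[] weights)
    {
        float maxAbs = weights.Max(w => Math.Abs(w));
        float scale = maxAbs == 0f ? 1f : maxAbs / 127f;

        sbyte[] values = weights
            .Select(w => (sbyte)Math.Clamp((int)MathF.Round(w / scale), -127, 127))
            .ToArray();
        return (values, scale);
    }

    // Recovers approximate float weights; the difference is the quantization error.
    public static float[] Dequantize(sbyte[] values, float scale) =>
        values.Select(v => v * scale).ToArray();
}
```

Each weight shrinks from four bytes to one, and the rounding error per weight is bounded by about half the scale factor, which is the trade-off between model size and accuracy.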

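Parallel Processing

Multi-threading: Utilize multi-threading to run multiple inferences simultaneously, leveraging .NET’s System.Threading.Tasks namespace. ML.NET’s PredictionEngine is not thread-safe, so each thread should use its own instance. In the example, mlContext, model, and the ModelInput and ModelOutput types are assumed to be defined elsewhere.

```csharp
// PredictionEngine is not thread-safe: give each worker thread its own instance.
Parallel.ForEach(dataBatch,
    () => mlContext.Model.CreatePredictionEngine<ModelInput, ModelOutput>(model),
    (item, _, engine) =>
    {
        var result = engine.Predict(item);
        // Process result
        return engine;
    },
    engine => engine.Dispose());
```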

Hardware Acceleration

GPU Utilization: Leverage GPUs for heavy computational tasks using libraries like TensorFlow.NET that support GPU acceleration.

Real-World Challenges and Solutions in Deploying AI-Powered Features in .NET Applications

Deploying AI-powered features in .NET applications comes with its set of challenges, but with careful planning and strategic solutions, these obstacles can be effectively managed.

Data Privacy and Security

One of the foremost concerns when integrating AI models in .NET applications is ensuring data privacy and security. With AI applications often relying on large datasets, which may include sensitive information, maintaining data integrity and confidentiality is crucial. To tackle this, robust encryption methods should be employed both during data storage and transmission. Anonymization techniques can further safeguard user identities. Implementing secure protocols and regularly updating security measures can significantly mitigate risks, ensuring that your application complies with data protection regulations and maintains user trust.
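As one example of such a technique, user identifiers can be replaced with keyed hashes before the data reaches a training pipeline. The sketch below uses only the .NET base class library (.NET 6 or later); the `Pseudonymizer` class is hypothetical, and in practice the secret key would come from a secure store rather than from code. Strictly speaking this is pseudonymization, since anyone holding the key can recompute the mapping.

```csharp
using System;
using System.Security.Cryptography;
using System.Text;

public static class Pseudonymizer
{
    // Replaces an identifier with a keyed hash: stable for joins, not reversible without the key.
    public static string Pseudonymize(string userId, byte[] secretKey)
    {
        byte[] hash = HMACSHA256.HashData(secretKey, Encoding.UTF8.GetBytes(userId));
        return Convert.ToHexString(hash);
    }
}
```

The same user ID always maps to the same token, so records can still be grouped per user, while the raw identifier never enters the dataset.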

Scalability

As AI models become more integral to application functionality, the ability to scale them efficiently is vital. Many AI applications need to handle increased loads and large datasets, which can strain resources if not properly managed. Leveraging cloud services like Azure Machine Learning and Azure Kubernetes Service (AKS) offers a robust solution. These platforms provide the infrastructure to scale your models dynamically, balancing loads and ensuring smooth operation even as demand fluctuates. Implementing load balancing and auto-scaling features ensures your AI-powered .NET application can grow seamlessly with user demand.

Model Maintenance and Updates

AI models require regular updates to remain effective and accurate. As new data becomes available, models need to be retrained and redeployed. This continuous cycle can be challenging without a structured approach. Setting up continuous integration and continuous deployment (CI/CD) pipelines for your AI models automates much of this process. By integrating automated retraining processes with updated datasets, you can ensure that your models evolve alongside your application’s needs. This approach not only keeps your models current but also minimizes downtime during updates.

Integration Complexity

Seamless integration of AI models into existing .NET applications can be complex, often requiring substantial changes to the codebase and workflows. To streamline this process, adopting well-defined APIs and a modular code architecture is essential. These practices facilitate easier integration and better maintainability. Conducting thorough testing is another critical step, as it ensures compatibility and performance stability. By isolating and addressing integration issues early, you can avoid costly fixes later in the development cycle.

Scaling and Deploying AI-Powered Apps

Strategies for Scaling AI-Powered Applications Using .NET Core and Azure Services

Scaling AI-powered applications effectively is crucial for handling increased loads and ensuring consistent performance. .NET Core and Azure provide a comprehensive ecosystem that supports various scaling strategies. Here are some specific methods to leverage these technologies for scalable AI solutions.

- Containerization with Docker: Package AI models into Docker containers to ensure consistency across different environments. Use Docker Hub or Azure Container Registry to store and manage these containers.

- Azure Kubernetes Service (AKS): Deploy containerized applications on AKS for automatic scaling and high availability. Configure AKS to adjust the number of pods based on traffic, ensuring efficient resource usage.

- Distributed Training with Azure Machine Learning: Use Azure ML’s distributed training capabilities to train large models across multiple nodes. This approach reduces training time and enhances model performance.

- Serverless Computing with Azure Functions: Implement Azure Functions for event-driven execution of AI models. This allows automatic scaling based on demand, reducing operational costs.

Deployment Considerations for AI Models in Cloud and On-Premises Environments

Deploying AI models involves a variety of considerations depending on whether the target environment is cloud-based or on-premises. Each environment has its own set of requirements and advantages.

AI Deployment Strategies in .NET

Cloud Deployment

- Azure Machine Learning: Deploy models using Azure ML’s managed endpoints, which handle load balancing and scaling automatically.

- Azure Container Instances: For simpler deployments, use ACI to run containerized models without managing underlying infrastructure.

- Security: Implement Azure Key Vault to manage secrets and credentials securely.
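For instance, an API key can be read from Key Vault at startup instead of being stored in configuration files. This sketch assumes the `Azure.Identity` and `Azure.Security.KeyVault.Secrets` NuGet packages; the vault URL and the secret name `VisionApiKey` are placeholders, and the running identity is assumed to have permission to read secrets.

```csharp
using System;
using System.Threading.Tasks;
using Azure.Identity;
using Azure.Security.KeyVault.Secrets;

public static class KeyVaultSketch
{
    public static async Task<string> GetApiKeyAsync()
    {
        // Placeholder vault URL; replace with your own vault.
        var vaultUri = new Uri("https://my-vault.vault.azure.net/");

        // DefaultAzureCredential tries managed identity, environment variables,
        // and developer sign-in, so no credential is stored in code.
        var client = new SecretClient(vaultUri, new DefaultAzureCredential());

        // "VisionApiKey" is a hypothetical secret name.
        KeyVaultSecret secret = await client.GetSecretAsync("VisionApiKey");
        return secret.Value;
    }

    public static async Task Main()
    {
        string apiKey = await GetApiKeyAsync();
        Console.WriteLine($"Loaded a key of length {apiKey.Length}");
    }
}
```

The retrieved value can then be passed to a service client in place of a hard-coded key.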

On-Premises Deployment

- Azure Stack: Use Azure Stack to bring cloud capabilities to your data center, ensuring compliance with data residency requirements.

- Docker and Kubernetes: Deploy models in Docker containers and manage them with an on-premises Kubernetes cluster for consistent operations across environments.

- Data Security: Ensure data encryption at rest and in transit, and comply with local regulations.

Monitoring and Optimizing AI Applications for Performance and Scalability in .NET

Maintaining optimal performance and scalability of AI applications requires continuous monitoring and strategic optimization. Using tools provided by Azure services and best practices in performance management can ensure that AI applications remain efficient and effective.

Here are strategies to monitor and optimize AI-powered applications in .NET.

Real-Time Monitoring

- Azure Monitor: Set up Azure Monitor to track application performance and resource utilization and to detect anomalies.

- Application Insights: Use Application Insights to collect telemetry data, create custom dashboards, and set up alerts for performance issues.

Performance Optimization

- Model Optimization: Use techniques like quantization and pruning to reduce model size and improve inference speed.

- Hardware Acceleration: Leverage GPUs or specialized hardware (e.g., FPGAs) in Azure for faster model training and inference.

- Resource Management: Optimize memory and CPU usage by profiling the application and fine-tuning the code.

Continuous Improvement

- Automated Retraining: Implement a pipeline for continuous model retraining using Azure ML, incorporating new data to keep models up-to-date.

- Hyperparameter Tuning: Use Azure ML’s hyperparameter tuning to find the optimal settings for model training, improving accuracy and performance.

- Scalability Testing: Regularly perform load testing to ensure the application can handle increased traffic and scale accordingly.

Conclusion

Building AI-driven products with .NET marks a pivotal stage in software engineering. By following best practices and leveraging the robust tools and frameworks within the .NET ecosystem, developers can effectively build and scale smarter, more responsive, and efficient AI-driven applications that drive success in a dynamic market.

For expert AI and ML development, reach out to bART Solutions.