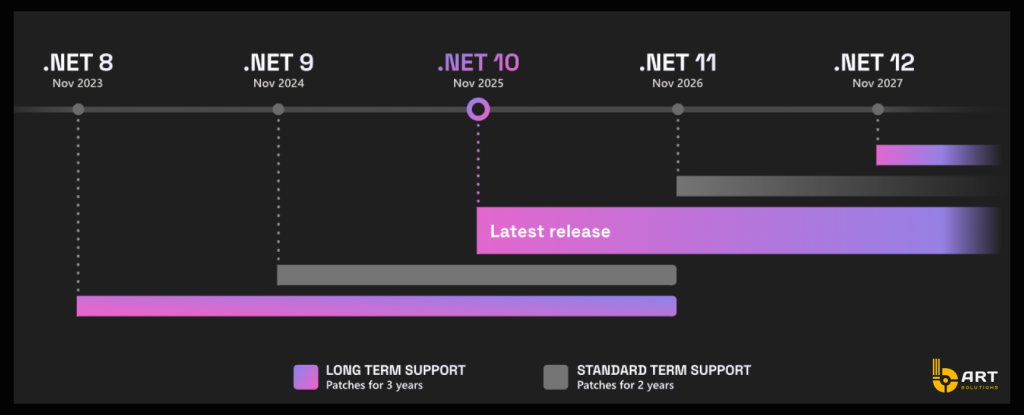

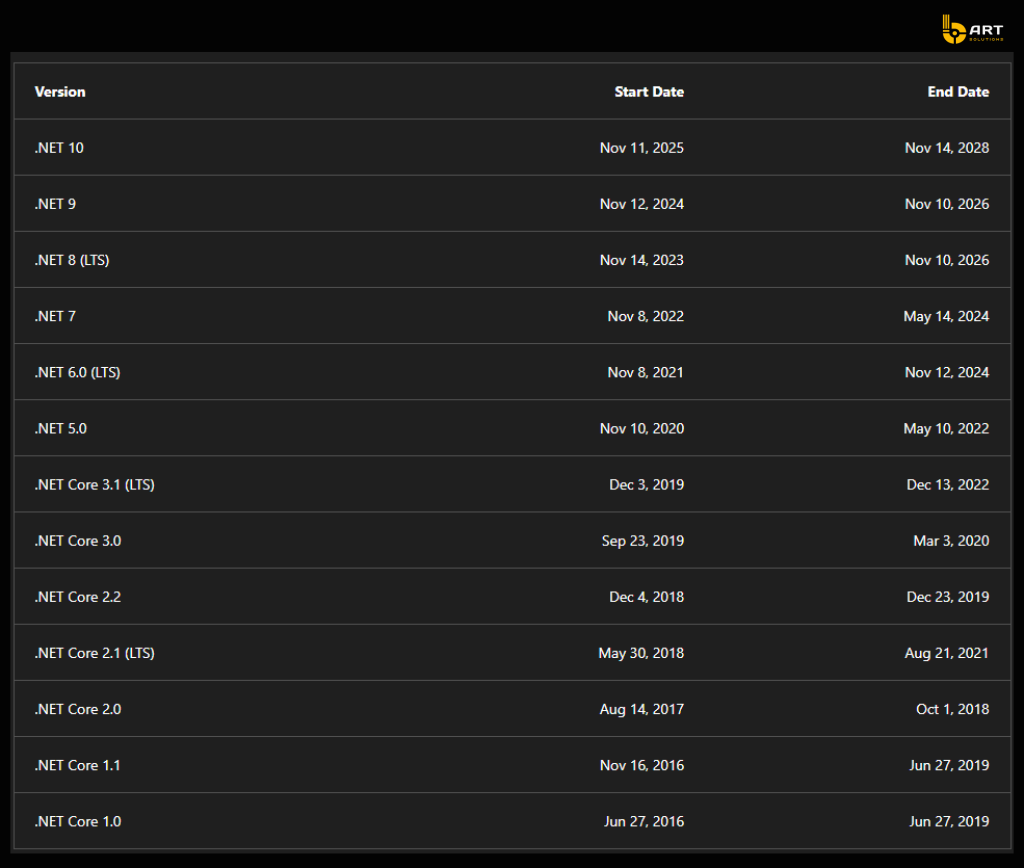

Modernizing .NET is no longer just about cleaning up technical debt or staying on a supported runtime. It is about preparing business-critical systems to support AI features, intelligent automation, retrieval-based experiences, and more demanding integration patterns without compromising security, reliability, or delivery speed. Microsoft’s current support policy makes that urgency concrete: as of February 2026, .NET 10 is the latest long-term support release, while .NET 8 and .NET 9 both reach end of support on November 10, 2026. .NET 10 remains supported through November 14, 2028. That gives companies a limited but clear window to align modernization with broader platform and AI roadmaps.

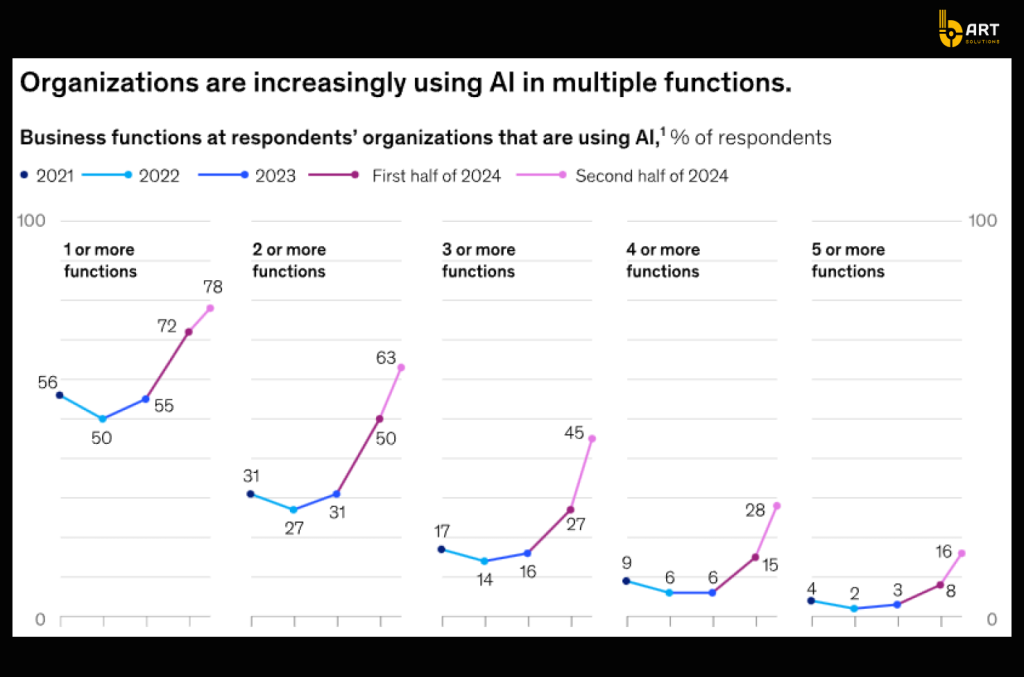

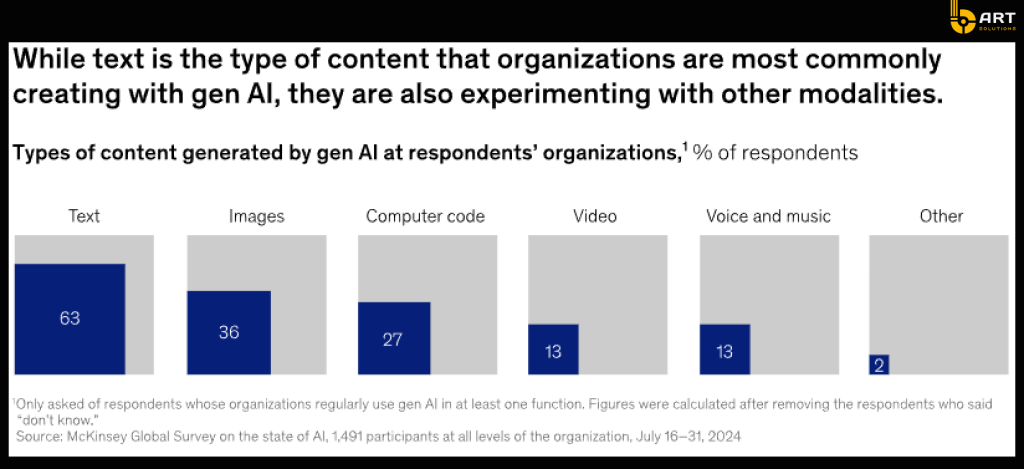

McKinsey’s 2025 global AI survey found that organizations are beginning to generate bottom-line impact not simply by deploying models, but by redesigning workflows, elevating governance, and embedding human validation into operating processes.

AI features increase pressure on application architecture, data quality, observability, security controls, and integration design. If the underlying platform is brittle, AI tends to magnify the weakness rather than solve it.

Why .NET modernization has become a business issue

A few years ago, many modernization projects were framed as technical hygiene. Today, modernization is tied directly to speed, resilience, and future product capability.

The first reason is support and risk. Running unsupported or soon-to-expire runtime versions increases exposure to security and compliance issues, but it also narrows your options for adopting current Microsoft tooling. The official .NET lifecycle now places .NET 10 as the current LTS release, with .NET 8 and .NET 9 on a much shorter remaining runway. For enterprise teams planning AI pilots, copilots, intelligent search, or document automation in the next 12 to 24 months, delaying the platform refresh only makes the later move more disruptive.
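
To make that runway concrete, the arithmetic can be sketched in a few lines of Python. This is only an illustration: the end-of-support dates are the ones quoted in this article, and the reference date of February 1, 2026 is an assumption chosen for the example.

```python
from datetime import date

# End-of-support dates as quoted in this article.
END_OF_SUPPORT = {
    ".NET 8": date(2026, 11, 10),
    ".NET 9": date(2026, 11, 10),
    ".NET 10": date(2028, 11, 14),
}


def days_of_support_left(runtime: str, as_of: date) -> int:
    """Return the number of days between as_of and the runtime's end of support."""
    return (END_OF_SUPPORT[runtime] - as_of).days


# Assumed reference date, for illustration only.
reference = date(2026, 2, 1)
for runtime in END_OF_SUPPORT:
    print(runtime, days_of_support_left(runtime, reference), "days of support left")
```

Measured from that assumed date, .NET 8 and .NET 9 have less than a year of support remaining, which is shorter than the 12 to 24 month AI planning horizon described above.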

The second reason is architectural fit. Modern AI-enabled enterprise apps are rarely single-tier systems. They usually involve API layers, orchestration, model access, telemetry, search or retrieval services, asynchronous processing, policy enforcement, and external integrations. Microsoft’s .NET roadmap reflects exactly that pattern. .NET Aspire is positioned as a platform for building observable, production-ready distributed applications, with built-in OpenTelemetry support for logs, metrics, and traces, alongside default health checks. In practical terms, that means Microsoft is actively shaping .NET for environments where multiple services must work together cleanly and be diagnosable in production.

The third reason is economic. Legacy application estates often tolerate hidden inefficiencies because they still “work.” But AI workloads are less forgiving. Weak service contracts, missing telemetry, tightly coupled modules, and fragmented data models quickly translate into slower feature delivery, expensive debugging, duplicated processing, or inconsistent outputs. McKinsey’s research is useful here because it reinforces a reality many engineering leaders already feel: value from gen AI comes when organizations rewire the surrounding operating model, not when they simply plug a model into an old workflow.

What makes a .NET application AI-ready

An AI-ready .NET application is not just an app that can call a model endpoint. It is an application that can support AI-driven capabilities in a way that is secure, observable, maintainable, and commercially viable.

That starts with a current platform foundation. Microsoft’s own .NET 10 announcement describes the release as more productive, modern, secure, intelligent, and performant, and specifically notes that the platform is designed to help teams “easily infuse” applications with AI. The official AI for .NET guidance now covers model integration, chat, agents, embeddings, custom data, and broader ecosystem tooling. This is a strong signal for enterprise buyers: current .NET is no longer just compatible with AI; Microsoft is treating AI as a first-class workload pattern for the platform.

The next requirement is a common AI integration layer. Microsoft.Extensions.AI is important here because it provides shared abstractions for chat clients, embeddings, tools, and other AI-related components. That may sound like a developer detail, but it has business value. Standardized abstractions reduce provider lock-in, simplify governance, and let teams apply middleware, logging, evaluation, or policy checks more consistently across different AI-powered features.
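
The idea of a shared abstraction with middleware can be illustrated with a small, language-neutral sketch, written here in Python. Every name in it (`ChatClient`, `EchoProvider`, `LoggingClient`, `summarize`) is hypothetical and chosen for illustration; it is not the Microsoft.Extensions.AI API, only the general shape of the pattern.

```python
from dataclasses import dataclass, field
from typing import Protocol


class ChatClient(Protocol):
    """Hypothetical provider-neutral contract (not the Microsoft.Extensions.AI API)."""

    def complete(self, prompt: str) -> str: ...


@dataclass
class EchoProvider:
    """Stand-in for a real model provider; it simply echoes the prompt."""

    name: str

    def complete(self, prompt: str) -> str:
        return f"[{self.name}] {prompt}"


@dataclass
class LoggingClient:
    """Middleware that wraps any ChatClient and records each call."""

    inner: ChatClient
    log: list[str] = field(default_factory=list)

    def complete(self, prompt: str) -> str:
        self.log.append(f"request chars={len(prompt)}")
        reply = self.inner.complete(prompt)
        self.log.append(f"response chars={len(reply)}")
        return reply


def summarize(client: ChatClient, text: str) -> str:
    """Feature code depends only on the contract, never on a specific provider."""
    return client.complete(f"Summarize: {text}")
```

Because `summarize` only sees the contract, swapping `EchoProvider("provider-a")` for another provider, or wrapping it in `LoggingClient`, requires no change to the feature code. That is the property that makes governance and logging easier to apply consistently.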

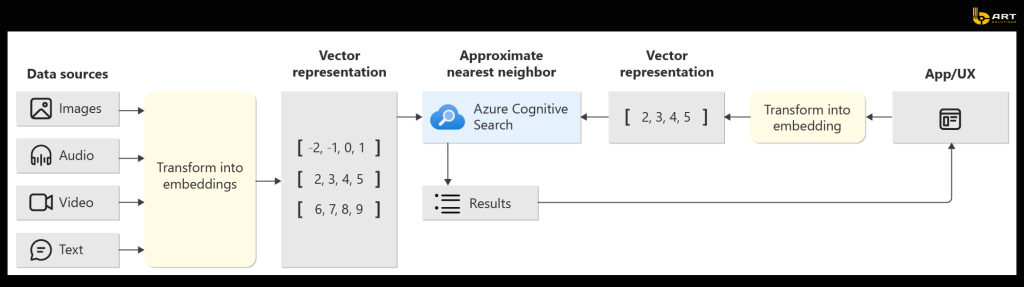

Another core capability is retrieval grounded in enterprise data. Most AI use cases that matter to enterprise buyers are not about producing creative text from scratch. They are about helping users find, interpret, summarize, or act on trusted company information. Azure AI Search now explicitly supports text, vector, and multimodal retrieval, and Microsoft documents its role in traditional and generative search scenarios, including vector indexing and hybrid search patterns. That makes it a practical building block for retrieval-augmented generation, internal copilots, document intelligence layers, and knowledge-driven support experiences.
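
For readers who want a feel for how hybrid search combines keyword and vector results, one common fusion technique is Reciprocal Rank Fusion (RRF). The Python sketch below is a generic illustration of that technique, not a reproduction of any search service's internals; the document names are invented and `k = 60` is a conventional default assumed here.

```python
def reciprocal_rank_fusion(rankings: list[list[str]], k: int = 60) -> list[str]:
    """Merge several ranked lists: each document scores 1 / (k + rank) per list."""
    scores: dict[str, float] = {}
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] = scores.get(doc_id, 0.0) + 1.0 / (k + rank)
    # Highest fused score first; ties are broken alphabetically for stable output.
    return sorted(scores, key=lambda doc_id: (-scores[doc_id], doc_id))


# Hypothetical result lists from a keyword query and a vector query.
keyword_hits = ["policy.pdf", "faq.md", "contract.docx"]
vector_hits = ["faq.md", "handbook.pdf", "policy.pdf"]

fused = reciprocal_rank_fusion([keyword_hits, vector_hits])
print(fused)
```

Documents that rank well in both lists rise to the top, while documents found by only one method still appear further down. That behavior is why hybrid retrieval tends to be more robust than either method alone.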

AI-ready also means governed. Azure AI Content Safety is designed to detect harmful user-generated and AI-generated content, while Microsoft’s broader Azure AI positioning emphasizes security, safety, and observability for enterprise AI applications and agents. For decision-makers, the takeaway is simple: if an AI-enabled feature touches customers, employees, regulated workflows, or brand-sensitive content, moderation and policy controls need to be part of the design, not a patch added after launch.

Where legacy .NET breaks under AI pressure

Most organizations do not discover they have an application foundation problem during an ordinary release. They discover it when they try to add a more demanding capability.

AI tends to expose four weak points fast.

The first is integration fragility. If business logic lives in a monolith with inconsistent APIs or tightly coupled dependencies, adding model calls, vector search, async processing, or external enrichment services becomes messy quickly. Instead of a clean pipeline, teams end up with bespoke connectors, fragile retry logic, and limited fault visibility. That slows down delivery and makes production incidents harder to explain.

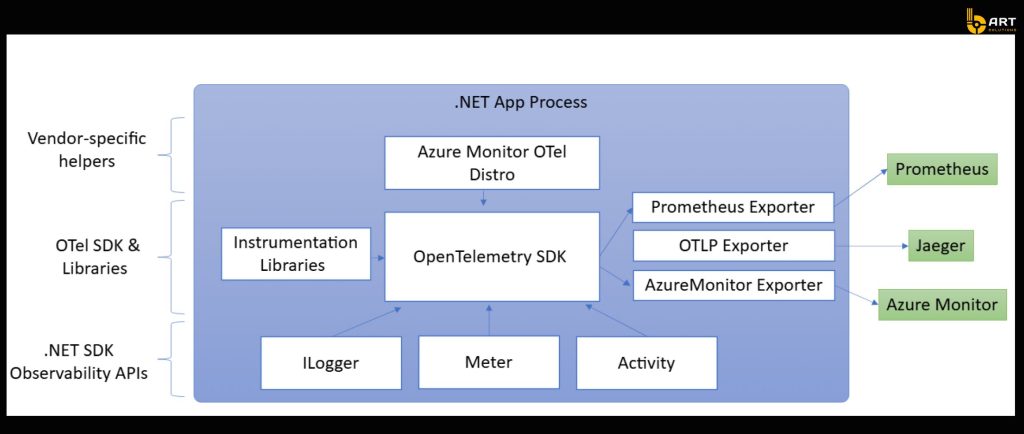

The second is poor observability. AI adds layers that are hard to debug without structured telemetry: prompts, retrieved context, model latency, tool usage, fallbacks, cache behavior, and user-facing responses. Microsoft’s .NET observability guidance is explicit that observability in distributed systems depends on logs, metrics, and traces, and that these signals should be collected continuously rather than only during debugging. If that telemetry is weak, teams struggle to distinguish a model issue from an integration issue or a data issue.
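
As a rough picture of what structured telemetry for an AI request looks like, the Python sketch below records one timed span per stage. It is a hand-rolled stand-in for illustration, not OpenTelemetry itself, and the stage names, attributes, and placeholder retrieval and model calls are all assumptions.

```python
import time
from contextlib import contextmanager

# Collected spans; a real system would export these to a telemetry backend.
SPANS: list[dict] = []


@contextmanager
def span(name: str, **attributes):
    """Record the duration, attributes, and outcome of one stage of a request."""
    start = time.perf_counter()
    record = {"name": name, "attributes": dict(attributes), "status": "ok"}
    try:
        yield record
    except Exception as exc:
        record["status"] = f"error: {type(exc).__name__}"
        raise
    finally:
        record["duration_ms"] = (time.perf_counter() - start) * 1000
        SPANS.append(record)


def answer(question: str) -> str:
    with span("retrieve", query_chars=len(question)) as s:
        context = ["doc-1", "doc-2"]  # placeholder for a real retrieval call
        s["attributes"]["documents"] = len(context)
    with span("model_call", model="hypothetical-model") as s:
        reply = f"Answer based on {len(context)} documents"  # placeholder for a real model call
        s["attributes"]["reply_chars"] = len(reply)
    return reply
```

With one record per stage, a slow or failed response can be attributed to retrieval or to the model call instead of being investigated as a single opaque request.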

The third is security posture. AI applications have a wider attack surface than conventional CRUD systems. OWASP’s Top 10 for LLM Applications highlights risks such as prompt injection, insecure output handling, model denial of service, training data poisoning, and supply chain vulnerabilities. Enterprise modernization efforts that ignore these AI-specific risks are setting themselves up for expensive rework later.
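
Insecure output handling is the easiest of these risks to picture in code. A minimal Python sketch, assuming the model is asked to return a JSON object with an `action` and an `argument`, treats that output as untrusted input and validates it before anything downstream acts on it. The action names and the 500-character limit are hypothetical.

```python
import json

# Hypothetical allow-list of actions the application is willing to perform.
ALLOWED_ACTIONS = {"create_ticket", "summarize_case"}


def parse_model_action(raw_output: str) -> dict:
    """Validate model output before any downstream code acts on it."""
    try:
        data = json.loads(raw_output)
    except json.JSONDecodeError as exc:
        raise ValueError("model output is not valid JSON") from exc
    if not isinstance(data, dict):
        raise ValueError("model output must be a JSON object")

    action = data.get("action")
    if not isinstance(action, str) or action not in ALLOWED_ACTIONS:
        raise ValueError(f"action not permitted: {action!r}")

    argument = data.get("argument")
    if not isinstance(argument, str) or len(argument) > 500:
        raise ValueError("argument must be a string of at most 500 characters")

    # Return only the validated fields; anything else the model sent is dropped.
    return {"action": action, "argument": argument}
```

A check like this does not prevent prompt injection on its own, but it limits what an injected instruction can make the application do.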

The fourth is data fragmentation. If a company’s most valuable operational knowledge is spread across disconnected systems, shared drives, email threads, and custom databases with inconsistent access rules, even the best model integration will underperform. AI is not magic; it is highly sensitive to the quality, structure, and accessibility of the information it depends on.

A practical modernization path

- The first step is to establish a supported runtime baseline. Inventory which applications still run on legacy frameworks or on .NET versions nearing end of support, and prioritize systems based on business criticality, integration centrality, and expected AI demand. That reduces immediate risk and creates a platform baseline for future work. Microsoft’s published lifecycle dates make this step easy to justify at leadership level because the support deadlines are not theoretical.

- The second step is to improve architectural fitness rather than chasing “microservices everywhere.” In practice, that means clarifying service boundaries, cleaning up APIs, reducing needless synchronous dependencies, and introducing more resilient processing patterns where appropriate. .NET Aspire is relevant here not because every company needs its full platform model immediately, but because it reflects a code-first, distributed, observable application approach that aligns with the way enterprise AI features are increasingly built.

- The third step is to invest in observability before AI features hit production. OpenTelemetry has become a key part of Microsoft’s .NET and Azure monitoring story, and Azure Monitor Application Insights now supports OpenTelemetry-based data collection as a standard path for collecting telemetry across services. For AI workloads, that observability layer is not optional. You need visibility into performance, failures, request chains, and increasingly into AI-specific flow behavior if you want to manage cost and user trust.

- The fourth step is to add AI as a governed capability layer. Microsoft’s .NET AI guidance, Azure OpenAI platform materials, and Foundry documentation all point toward a stack where models, tools, retrieval, orchestration, tracing, and evaluation are managed as part of an application platform rather than scattered across experimental code. Azure’s own positioning emphasizes support for automating complex workflows, managing dynamic scenarios, and building and governing AI apps and agents. That makes modernization a strategic enabler: once the foundation is in place, teams can add targeted AI use cases far faster and with less risk.
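
The inventory and prioritization in the first step can be sketched as a simple scoring exercise, shown here in Python. The criteria are the ones named above (business criticality, integration centrality, expected AI demand, and support status); the 1 to 5 scale, the weight given to an unsupported runtime, and the application names are assumptions for illustration only.

```python
from dataclasses import dataclass


@dataclass
class App:
    name: str
    runtime_supported: bool
    business_criticality: int    # 1 (low) to 5 (high)
    integration_centrality: int  # 1 (low) to 5 (high)
    expected_ai_demand: int      # 1 (low) to 5 (high)


def priority(app: App) -> int:
    """Higher score means modernize sooner."""
    score = (
        app.business_criticality
        + app.integration_centrality
        + app.expected_ai_demand
    )
    if not app.runtime_supported:
        score += 5  # hypothetical weight for running on an unsupported runtime
    return score


# Hypothetical portfolio.
portfolio = [
    App("billing-api", False, 5, 4, 3),
    App("intranet-wiki", True, 2, 1, 4),
    App("claims-portal", True, 4, 3, 5),
]

ranked = sorted(portfolio, key=priority, reverse=True)
for app in ranked:
    print(app.name, priority(app))
```

The exact weights matter less than having an explicit, repeatable ranking that leadership can review and challenge.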

Business use cases this unlocks

A modernized .NET estate is much better positioned to support intelligent document workflows, such as extracting and validating data from contracts, invoices, claims, or onboarding records. It can power internal copilots grounded in enterprise documentation through retrieval-based search. It can also enable support-desk summarization, case intelligence, next-best-action recommendations, and operational assistants that pull from governed company data rather than producing generic outputs. Microsoft’s Azure AI positioning and .NET AI tooling make these scenarios much more achievable than they were even two years ago, but they only work reliably when the application foundation can support integration, telemetry, and policy controls.

There is also a cost argument. Azure OpenAI’s Batch API documentation notes that large-scale asynchronous processing can run at 50% less cost than global standard pricing for qualifying batch scenarios, with separate quota designed not to disrupt online workloads. That is a useful example of how modern architecture choices affect AI economics. Teams with a clean, modular platform can choose the right processing mode for the business case instead of forcing every workload through a real-time pattern.
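
The effect of that discount on a mixed workload is simple arithmetic, sketched below in Python. The 50% figure is the one quoted above; the token volume, the price per million tokens, and the share of work that can tolerate batch processing are hypothetical inputs, not published prices.

```python
BATCH_DISCOUNT = 0.50  # the 50% figure quoted above


def monthly_cost(tokens: int, price_per_million: float, batch_share: float) -> float:
    """Blend real-time and batch pricing for a given share of batch-eligible work."""
    if not 0.0 <= batch_share <= 1.0:
        raise ValueError("batch_share must be between 0 and 1")
    base = tokens / 1_000_000 * price_per_million
    realtime_part = base * (1 - batch_share)
    batch_part = base * batch_share * (1 - BATCH_DISCOUNT)
    return realtime_part + batch_part


# Hypothetical workload: 200 million tokens at 2.00 per million tokens.
all_realtime = monthly_cost(200_000_000, 2.00, batch_share=0.0)
mostly_batch = monthly_cost(200_000_000, 2.00, batch_share=0.6)
print(all_realtime, mostly_batch)
```

Under these assumed inputs, moving 60% of the volume to batch reduces the bill by 30%. That saving is only available when the architecture can separate latency-sensitive requests from work that can wait.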

Best practices for AI-ready .NET modernization

1. Treat modernization as a business initiative, not just a technical upgrade

.NET modernization should support a broader business goal: faster product delivery, stronger operational resilience, and readiness for AI-driven capabilities. If modernization is handled only as an infrastructure task, companies often end up with a newer runtime but the same architectural and operational bottlenecks.

2. Start with the systems that carry the highest business risk

Not every application needs to be modernized at once. Prioritize systems that are closest to revenue, customer experience, compliance, or critical internal operations. These are the applications where outdated architecture creates the greatest delivery risk and where AI-enabled improvements are most likely to produce measurable business value.

3. Align modernization plans with Microsoft’s support lifecycle

A practical starting point is to review your .NET estate against Microsoft’s support deadlines and identify which applications are already unsupported or approaching end of support. This helps leadership prioritize modernization based not only on technical debt, but also on security, compliance, and long-term platform stability.

4. Fix observability before adding AI features

AI workloads are harder to manage than traditional business logic. They introduce more dependencies, more points of failure, and more complex behavior in production. That is why strong logging, metrics, and distributed tracing should be in place before AI features are deployed. Without observability, teams struggle to understand failures, performance issues, or cost drivers.

5. Build AI on top of governed enterprise data

Enterprise AI becomes useful when it works with trusted business data, not when it generates generic output. That means modernization should include better access to internal knowledge, clearer permissions, stronger retrieval quality, and more disciplined data governance. In most real-world scenarios, the quality of connected enterprise data matters more than the model itself.

6. Do not layer AI onto broken workflows

If an existing workflow already suffers from fragile integrations, inconsistent APIs, or unclear ownership, AI will usually amplify those weaknesses instead of fixing them. The better approach is to remove structural friction first, then introduce AI where it can improve accuracy, speed, or decision support in a controlled way.

7. Standardize AI implementation patterns across teams

As AI use cases expand, inconsistency becomes expensive. Leadership should encourage common patterns for model integration, telemetry, fallback logic, human review, and safety controls. This reduces duplication, simplifies governance, and makes it easier to scale successful use cases across products and departments.

8. Build governance into the architecture from day one

AI governance should not be treated as a final review step before launch. Security controls, content moderation, access policies, monitoring, and output validation need to be part of the application design itself. This is especially important for customer-facing experiences, regulated industries, and business-critical workflows.

9. Measure success by readiness

The goal is not simply to move applications to a newer .NET version. The real measure of success is whether the business can launch AI-powered capabilities faster, more safely, and with greater operational control than before. That is what turns modernization from a maintenance project into a strategic investment.

Final thought

Modernizing .NET for AI-ready enterprise apps is not about chasing hype or rebuilding everything from scratch. It is about putting the business on a platform that can support the next wave of product and operational capabilities without turning every new initiative into a high-risk integration exercise.

The companies that get value from AI will not be the ones that simply bolt models onto old systems. They will be the ones that modernize the foundation, improve observability, govern data access, and treat AI as part of the enterprise application architecture.

With strong expertise in .NET development, bART Solutions helps organizations modernize legacy applications and improve system performance. Contact us and let’s create a reliable foundation for AI-driven innovation together!